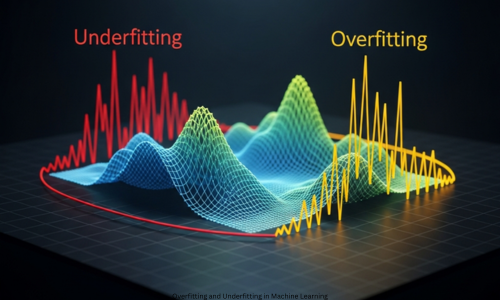

Overfitting and Underfitting in Machine Learning

Machine learning powers modern tech. It drives recommendation engines, fraud checks, health scans, and self-driving cars. Still, success takes more than picking the best algorithm or gathering big data. A central hurdle in machine learning is balancing too much learning against too little.

This balance hinges on two key ideas: overfitting and underfitting.

Overfitting and underfitting rank among the top issues in machine learning. They shape how well a model works on fresh data. Anyone who works with data, whether newcomer, student, researcher, or professional, must grasp them. This post covers what they mean, why they occur, how to spot them, their consequences, and the fixes that prevent them. You will end up with solid skills for building reliable machine learning models.

Model Generalization Basics

Grasp generalization first, before overfitting or underfitting. Generalization means a model performs well on new data. Rather than memorizing training examples, it captures core patterns that hold across a broad range of inputs.

Poor generalization splits into two traps:

- Overfitting: Model grabs training data plus noise.

- Underfitting: Model misses data’s core patterns.

Each leads to weak results, just in different ways.

Overfitting Explained

Overfitting hits when a model hugs the training data too tightly. It soaks up not only true signals but also noise, flaws, and chance fluctuations. As a result, it aces training but flops on new data.

Overfitting in Plain Terms

Picture cramming exam answers without grasping the ideas. You nail the practice sheet. But tweak the questions, and scores crash. Overfitting does the same: the model parrots its data and skips the broad rules.

Signs of Overfitting

Overfit models share traits:

- Sky-high training scores.

- Weak validation or test scores.

- Overly complex setup.

- Jolts from tiny data shifts.

- Lousy real-life results.

Overfit models stay brittle, not tough.

Overfitting Causes

Several factors spark overfitting:

Complex Models

Parameter-heavy setups like deep nets or high-degree polynomials can fit almost any training set, noise included.

Tiny Datasets

Scarce data lets noise pass as signal.

Messy Data

Errors, outliers, and glitches in the data push the model toward memorization.

Excess Features

Irrelevant or redundant features raise the risk of overfitting.

Overlong Training

Too many training rounds etch in fine details that do not generalize.

Overfitting Samples

Sample 1: Polynomial Fits

High-degree polynomial curves can hit every training point with zero error. Yet they miss badly on new points.
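A minimal sketch of this effect, using made-up toy data: the true relation is assumed to be y = 2x, and a fixed alternating offset stands in for noise. The helper names (`poly`, `fit_line`, `line`, `mse`) are illustrative, not from any library. The degree-4 polynomial passes through all five training points, while a plain least-squares line does not.

```python
# Toy data, assumed for illustration: the true relation is y = 2x,
# and a fixed alternating "noise" term is added to each training target.
train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [2 * x + e for x, e in zip(train_x, [0.5, -0.5, 0.5, -0.5, 0.5])]

# Held-out points just outside the training range, with noise-free targets.
test_x = [-1.0, 5.0]
test_y = [2 * x for x in test_x]


def poly(x):
    """Degree-4 polynomial through all five training points (Lagrange form)."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(train_x, train_y)):
        term = yi
        for j, xj in enumerate(train_x):
            if j != i:
                term *= (x - xj) / (xi - xj)
        total += term
    return total


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


slope, intercept = fit_line(train_x, train_y)


def line(x):
    """Prediction of the fitted straight line."""
    return slope * x + intercept


def mse(predict, xs, ys):
    """Mean squared error of a prediction function on (xs, ys)."""
    return sum((predict(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


print("polynomial train/test MSE:", mse(poly, train_x, train_y), mse(poly, test_x, test_y))
print("line       train/test MSE:", mse(line, train_x, train_y), mse(line, test_x, test_y))
```

On this toy set the polynomial has zero training error but a test error in the hundreds, while the line accepts a small training error and stays close on the held-out points.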

Sample 2: Decision Trees

Deep trees classify the training set perfectly. New data stumps them.

Sample 3: Neural Nets

Big nets trained on slim data overfit unless controls such as regularization are in place.

Underfitting Defined

Underfitting strikes when a model is too basic for the patterns in the data. It fails to learn enough from the training set. Such models tank on training and test data alike.

Underfitting Simply

Think of tackling advanced equations with only basic arithmetic. However hard you push, the depth stays out of reach. That’s underfitting.

Underfitting Traits

These models display:

- Meager training scores.

- Meager validation scores.

- Heavy bias.

- Too-easy rules.

- Blind to patterns.

They flop on real jobs.

Underfitting Triggers

Basic Models

Straight lines fitted to twisty data.

Short Training

Training quits early and skips key patterns.

Weak Features

The inputs leave out vital features.

Heavy Controls

Overly tight regularization blocks the model from fitting real patterns.

Underfitting Cases

Case 1: Linear on Curves

Straight fits can’t hug bends.

Case 2: Short Trees

Few-branch trees miss twists.

Case 3: Thin Nets

Nets with too few layers or nodes learn too little.

Bias-Variance Balance

Overfitting and underfitting tie directly to the bias-variance tradeoff.

Bias

Bias is the error that comes from overly simple assumptions about the data. High bias sparks underfitting.

Variance

Variance is the model's sensitivity to fluctuations in the training data. High variance fuels overfitting. Strong models hit the sweet spot on both.
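For squared-error loss, the tradeoff is often written as a decomposition of expected test error. The notation here is an assumption added for illustration: the data follow $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$, and $\hat{f}$ is the model fitted on a random training set.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\big[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\big]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

Underfit models are dominated by the first term, overfit models by the second. No model can remove the third.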

How to Spot Overfitting and Underfitting

Training vs. Validation Error

- Overfitting: Low training error. High validation error.

- Underfitting: High error on both.
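The two rules above can be sketched as a small check. The function name `diagnose_fit` and both thresholds are assumptions for illustration only. Sensible cutoffs depend on the task and the metric.

```python
def diagnose_fit(train_error, val_error, max_acceptable_error=0.10, max_gap=0.05):
    """Label a model from its training and validation error rates.

    Both thresholds are illustrative assumptions, not standard values.
    """
    train_high = train_error > max_acceptable_error
    val_high = val_error > max_acceptable_error

    if train_high and val_high:
        return "underfitting"      # high error on both sets
    if not train_high and val_error - train_error > max_gap:
        return "overfitting"       # low training error, much higher validation error
    if not train_high:
        return "good fit"          # low training error and a small gap
    return "unclear"               # training error high but validation error low


print(diagnose_fit(0.02, 0.30))
print(diagnose_fit(0.35, 0.38))
print(diagnose_fit(0.05, 0.07))
```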

Learning Curves

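Plot training and validation scores against training time or training-set size. A gap that keeps widening between the two curves points to overfitting. Two curves that level off together at a poor score point to underfitting.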
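Before plotting, the same information can be read from the raw numbers. This sketch assumes per-epoch loss lists are already recorded. The function name `summarize_learning_curve` and the sample losses are made up for illustration.

```python
def summarize_learning_curve(train_losses, val_losses):
    """Return (gaps, best_epoch) for two equal-length per-epoch loss lists.

    gaps[i] is validation loss minus training loss at epoch i (0-indexed).
    best_epoch is the epoch with the lowest validation loss.
    """
    if not train_losses or len(train_losses) != len(val_losses):
        raise ValueError("need two non-empty lists of equal length")
    gaps = [v - t for t, v in zip(train_losses, val_losses)]
    best_epoch = min(range(len(val_losses)), key=val_losses.__getitem__)
    return gaps, best_epoch


# Made-up losses: training loss keeps falling, validation loss turns upward.
train = [0.90, 0.60, 0.40, 0.25, 0.15, 0.08]
val = [0.95, 0.70, 0.55, 0.50, 0.58, 0.70]
gaps, best_epoch = summarize_learning_curve(train, val)
print("best epoch:", best_epoch)
print("gap at start and end:", gaps[0], gaps[-1])
```

A gap that grows after the best epoch is the numeric form of the widening curves in an overfitting plot.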

Cross-Validation

Test model steadiness over varied data splits.
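A minimal sketch of how k-fold splits can be produced, in plain Python. The function name `k_fold_indices` is an assumption for illustration. It does not shuffle, so real data would usually be shuffled first.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, val_indices) for k contiguous folds.

    Every sample lands in exactly one validation fold. When n_samples
    is not divisible by k, the first folds get one extra sample.
    """
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    base, extra = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        size = base + (1 if fold < extra else 0)
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, val
        start += size


for train_idx, val_idx in k_fold_indices(10, 3):
    print("validate on", val_idx, "train on", train_idx)
```

Training one model per fold and comparing the validation scores shows how steady the model is. A wide spread across folds is a warning sign.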

Ways to Stop Overfitting

Add More Training Data

Extra data cuts down on rote learning.

Pick Key Features

Drop features that do not matter.
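One simple filter, sketched here as an example, drops columns that barely vary. It is only one of many selection methods and it ignores the target entirely. The function name `drop_low_variance_features` and the threshold are assumptions for illustration.

```python
from statistics import pvariance


def drop_low_variance_features(rows, threshold=0.0):
    """Keep only columns whose population variance exceeds `threshold`.

    `rows` is a list of equal-length numeric lists, one list per sample.
    Returns (filtered_rows, kept_column_indices).
    """
    columns = list(zip(*rows))
    keep = [i for i, col in enumerate(columns) if pvariance(col) > threshold]
    filtered = [[row[i] for i in keep] for row in rows]
    return filtered, keep


# Made-up data: the middle column is constant, so it carries no information.
data = [
    [1.0, 5.0, 0.2],
    [2.0, 5.0, 0.1],
    [3.0, 5.0, 0.4],
]
filtered, kept = drop_low_variance_features(data)
print(kept)
print(filtered)
```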

Use Regularization

Punish models that get too complex. Try L1 or L2.
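A small sketch of what the penalties look like, assuming a penalty strength `lam` (a made-up name for the usual regularization coefficient). The last function shows the effect on the simplest case, a single weight with no intercept, where the L2-penalized least-squares solution has a closed form.

```python
def l1_penalty(weights, lam):
    """L1 penalty: lam times the sum of absolute weights."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """L2 penalty: lam times the sum of squared weights."""
    return lam * sum(w * w for w in weights)


def ridge_weight(xs, ys, lam):
    """Minimize sum((y - w*x)^2) + lam * w^2 for a single weight w.

    Setting the derivative to zero gives w = sum(x*y) / (sum(x*x) + lam).
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


xs, ys = [1.0, 2.0, 3.0], [2.0, 4.0, 6.0]
print(ridge_weight(xs, ys, 0.0))    # no penalty
print(ridge_weight(xs, ys, 14.0))   # strong penalty shrinks the weight
```

A larger `lam` pulls the weight toward zero. Too large a value trades overfitting for underfitting.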

Early Stopping

Halt training when validation scores stall.
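A common way to decide that scores have stalled is a patience counter. The sketch below assumes validation losses are recorded once per epoch. The function name and the `patience` and `min_delta` parameters are illustrative, not taken from any particular library.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return (stop_epoch, best_epoch), both 0-indexed.

    Training stops at the first epoch that is `patience` epochs past the
    last improvement. An improvement is a loss lower than the best so far
    by more than `min_delta`.
    """
    if not val_losses:
        raise ValueError("val_losses must not be empty")
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    return len(val_losses) - 1, best_epoch


# Made-up validation losses: improvement ends after epoch 2.
print(early_stopping_epoch([0.90, 0.70, 0.60, 0.62, 0.65, 0.70], patience=2))
```

In practice the weights saved at `best_epoch` are usually the ones kept.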

Dropout

Turn off some neurons at random during training. Best suited to neural nets.
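A minimal sketch of the idea on a plain list of activations, using the common "inverted" variant that rescales the surviving values so their expected sum stays the same. The function name and signature are assumptions for illustration. Deep learning frameworks ship their own dropout layers.

```python
import random


def dropout(activations, drop_prob, rng, training=True):
    """Inverted dropout on a list of activations.

    During training, each value is zeroed with probability `drop_prob`
    and survivors are scaled by 1 / (1 - drop_prob). At inference time
    the values pass through unchanged.
    """
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    if not training or drop_prob == 0.0:
        return list(activations)
    keep_prob = 1.0 - drop_prob
    return [a / keep_prob if rng.random() < keep_prob else 0.0 for a in activations]


rng = random.Random(0)  # seeded only to make the example repeatable
print(dropout([1.0, 1.0, 1.0, 1.0, 1.0, 1.0], 0.5, rng))
print(dropout([1.0, 2.0, 3.0], 0.5, rng, training=False))
```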

Data Augmentation

Make fresh training samples by transforming the ones you have, for example by flipping or cropping images.
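A tiny sketch of one such transformation, with images represented as lists of pixel rows. It assumes a left-right flip does not change the label, which holds for many photo tasks but not for digits or text. Both function names are made up for illustration.

```python
def horizontal_flip(image):
    """Mirror a 2D image (a list of pixel rows) left to right."""
    return [row[::-1] for row in image]


def augment_with_flips(images, labels):
    """Return the originals plus one flipped copy of each, reusing the labels."""
    aug_images = list(images) + [horizontal_flip(img) for img in images]
    aug_labels = list(labels) + list(labels)
    return aug_images, aug_labels


image = [[1, 2, 3],
         [4, 5, 6]]
images, labels = augment_with_flips([image], ["cat"])
print(images)
print(labels)
```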

Ensemble Methods

Blend several models. Lowers variance.
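The simplest blend is an average of each model's prediction. The sketch below assumes the models have already been trained and their predictions collected. The function name and the numbers are made up for illustration.

```python
from statistics import mean


def ensemble_average(model_predictions):
    """Average predictions across models.

    model_predictions[m][i] is model m's prediction for sample i.
    Returns one averaged prediction per sample.
    """
    return [mean(sample_preds) for sample_preds in zip(*model_predictions)]


# Made-up predictions from three models for two samples.
# Individual models err in different directions around 2.0 and 4.0.
predictions = [
    [2.5, 3.5],
    [1.5, 4.5],
    [2.0, 4.0],
]
print(ensemble_average(predictions))
```

Averaging helps most when the models' errors are not strongly correlated, since errors in opposite directions partly cancel.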

Ways to Fix Underfitting

Boost Model Power

Pick models that capture more details.

Add Useful Features

Bring in features that hold real info.

Cut Back Regularization

Give the model room to grow.

Train for More Steps

Allow enough time to learn.

Feature Engineering

Build smart features from basic data.
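A small sketch of how one engineered feature can cure underfitting. The toy data assume the target is exactly the square of the input, and `fit_and_score` is a made-up helper that fits a least-squares line to a single feature and returns its training error.

```python
def fit_and_score(features, targets):
    """Fit y = slope * feature + intercept by least squares; return training MSE."""
    n = len(features)
    mean_f = sum(features) / n
    mean_t = sum(targets) / n
    sff = sum((f - mean_f) ** 2 for f in features)
    sft = sum((f - mean_f) * (t - mean_t) for f, t in zip(features, targets))
    slope = sft / sff
    intercept = mean_t - slope * mean_f
    return sum((slope * f + intercept - t) ** 2 for f, t in zip(features, targets)) / n


# Toy data, assumed for illustration: y = x squared.
xs = [-2.0, -1.0, 0.0, 1.0, 2.0]
ys = [x * x for x in xs]

raw_mse = fit_and_score(xs, ys)                           # straight line on the raw input
engineered_mse = fit_and_score([x * x for x in xs], ys)   # same model on x squared

print("raw feature MSE:", raw_mse)
print("engineered feature MSE:", engineered_mse)
```

The model stays a straight line in both runs. Only the feature changes, and the error on this toy set drops from 2.8 to zero.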

Overfitting and Underfitting by Algorithm

Linear Models

- Overfitting from too many features.

- Underfitting from basic linear rules.

Decision Trees

- Overfitting in deep trees.

- Underfitting in shallow ones.

Neural Networks

- Overfitting with big nets and little data.

- Underfitting with tiny nets or short training.

Real-World Effects of Overfitting and Underfitting

Healthcare

- Overfitting may wrongly spot diseases.

- Underfitting skips vital signs.

Finance

- Overfit models crash in new markets.

- Underfit ones predict poorly.

Marketing

- Overfit models target the wrong customers.

- Underfitting ignores buying habits.

Model Metrics and Overfitting Checks

Check these on new data:

- Accuracy

- Precision

- Recall

- F1-score

- Mean Squared Error

This proves true performance.
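The metrics listed above can be computed by hand for a binary classifier, which makes their definitions concrete. This is a plain-Python sketch with made-up function names. The zero returned for an undefined ratio is a convention chosen here, not a universal rule.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall and F1 for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def mean_squared_error(y_true, y_pred):
    """Average squared difference, for regression targets."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Made-up held-out labels and predictions.
print(classification_metrics([1, 1, 1, 0, 0, 0, 0, 0], [1, 1, 0, 1, 0, 0, 0, 0]))
print(mean_squared_error([1.0, 2.0], [1.0, 4.0]))
```

Computing the same metrics on the training set and comparing the two results ties this back to the overfitting checks earlier in the post.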

Why Cross-Validation Matters

It tests models on many data splits. That mimics real use more closely than a single split and makes overfitting easier to catch before deployment.

Signs of a Good Fit

A strong model:

- Grabs real patterns.

- Skips noise.

- Works on new data.

- Stays solid.

This fit drives machine learning success.

Overfitting and Underfitting in Deep Learning

Deep models overfit easily because of their high capacity. Batch normalization, dropout, and transfer learning help tame it.

What’s Next for Overfitting and Underfitting

Research eyes:

- Auto regularization

- Models that adjust alone

- Clear AI explanations

- AutoML tools

The shared goal is stronger guarantees of broad performance.

Conclusion

Overfitting and underfitting are among the top hurdles in machine learning, and every practitioner needs to grasp them. Overfitting means memorizing too much of the training data. Underfitting means learning too little from it. Both block good performance on fresh data. Learn the causes, signs, and fixes. Build robust systems. Nail the fit balance. It’s key for real machine learning work.

FAQs

Q1. What is overfitting in machine learning?

A model overfits when it grabs training data details, noise included. This drops its skill on new data.

Q2. What is underfitting in machine learning?

Underfitting hits when a model lacks power to match data patterns. It flops on training and test sets.

Q3. Why are overfitting and underfitting important concepts?

They shape how well a model handles new cases. This decides real-world success.

Q4. How can you identify overfitting?

Spot overfitting by top training scores paired with weak validation or test results.

Q5. How can you identify underfitting?

Underfitting shows in weak scores across training and validation sets.

Q6. What causes overfitting in machine learning models?

It stems from overly complex models, tiny data sets, noise, extra features, or long training runs.

Q7. What causes underfitting in machine learning models?

Simple models, short training, key feature gaps, or heavy regularization spark underfitting.

Q8. What is the bias–variance tradeoff?

This tradeoff weighs bias, which drives underfitting, against variance, which drives overfitting. Balancing the two sets generalization strength.

Q9. Is overfitting always worse than underfitting?

No. Both hurt, yet overfitting is often harder to notice because training scores look excellent.

Q10. How does dataset size affect overfitting?

Tiny data sets boost overfitting risk. Models just memorize instead of spotting patterns.

Q11. How can overfitting be reduced?

Cut overfitting with regularization, early stopping, feature selection, more training data, and data augmentation. Use cross-validation to check that the fixes work.

Q12. How can underfitting be reduced?

Fight underfitting by ramping model power, adding features, easing regularization, and extending training.

Q13. Are deep learning models more prone to overfitting?

Often, yes. Their large parameter counts raise overfitting risk, mainly on small data sets.

Q14. Can cross-validation help detect overfitting?

Yes. It tests across data splits, so overfitting shows up clearly.

Q15. How does regularization help prevent overfitting?

Regularization adds a penalty for model complexity. This pushes training toward simpler, more general models.

Q16. What role does feature selection play in overfitting?

It trims junk features. Less noise means lower overfitting chance.

Q17. Can a model suffer from both overfitting and underfitting?

Not in the same place at once. But a model can underfit some regions or feature subsets of the data while overfitting others.

Q18. Why is generalization more important than training accuracy?

Training wins mean little for real use. Strong generalization nails unseen data.

Q19. What is a well-fitted model?

It nails true patterns, skips noise, and shines on training plus new data.

Q20. How do overfitting and underfitting impact real-world applications?

They spark bad calls, wrong choices, money drains, and shaky AI tools.